The beginning of the debate:

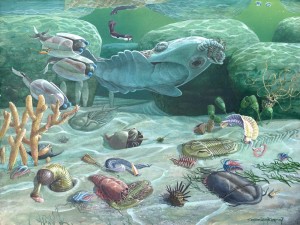

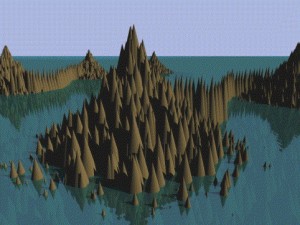

Meyer, of course, started off the debate arguing that the high level of information needed to produce the very wide variety of completely unique body plans (most modern phyla) that show up, all at once, in the Cambrian is essentially impossible for the Darwinian mechanism of random mutations and natural selection to explain. The argument is that “information is running the show”, as Meyer put it, and this information cannot be generated by mindless mechanisms. As an example, Meyer pointed out that qualitatively new protein-coding genes are extraordinarily difficult to evolve because of their “fantastic rarity” in sequence space. Given such rarity, it would simply take too long for random mutations to sort through all the junk sequences in sequence space to find any such beneficial island.

What then is the source of this information? Well, Meyer argued that we must appeal to our uniform and repeated past experience with known ways that complex digital codes are actually produced – which is by intelligence alone (17:25). There simply is no other known option available to us. So, the “best explanation”, as Darwin himself argued, is the one that is known to actually work to produce the phenomenon in question.

Marshall’s initial response:

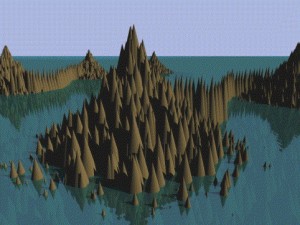

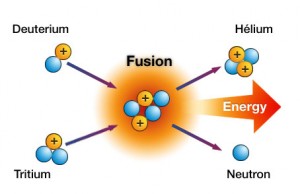

Marshall was very cordial as he started off his initial response to Meyer’s central claims (19:30). When asked to expand on his critique of Meyer’s book, he opened with an illustration of how the source of the Sun’s massive energy output was originally unknown during Newton’s day (now known to be the result of nuclear fusion). Therefore, one could have hypothesized, similar to Meyer’s hypothesis, that because no natural mechanism was known at the time, something outside of nature, some supernatural intelligence or source of energy, was feeding the Sun in some undetectable manner. However, if one “trusts the process of science… eventually we discover things that make the apparently non-sensible sensible. This takes us back to the world of Plato where the sensible is the world of cause and effect.”

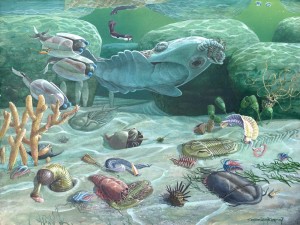

Marshall then went on to suggest that because of this starting bias it is possible that scientists may be missing something when it comes to explaining an event as unusual as the “Cambrian Explosion”. This is why he was so keen to read Meyer’s book, Darwin’s Doubt, since it was coming from an “outside perspective” that might highlight something that mainstream scientists had been overlooking. However, Marshall was disappointed by Meyer’s arguments because he felt that they were more “1980s arguments” – a bit out of touch with more modern discoveries and understandings of how genetics works and how novel complexity evolves. Marshall explained that back in the 1980s, when he was an undergraduate student, there really wasn’t a very good explanation for Meyer’s arguments (as was the case in Newton’s day for the origin of the Sun’s energy). Since then, however, “enormous progress has been made” in understanding these things.

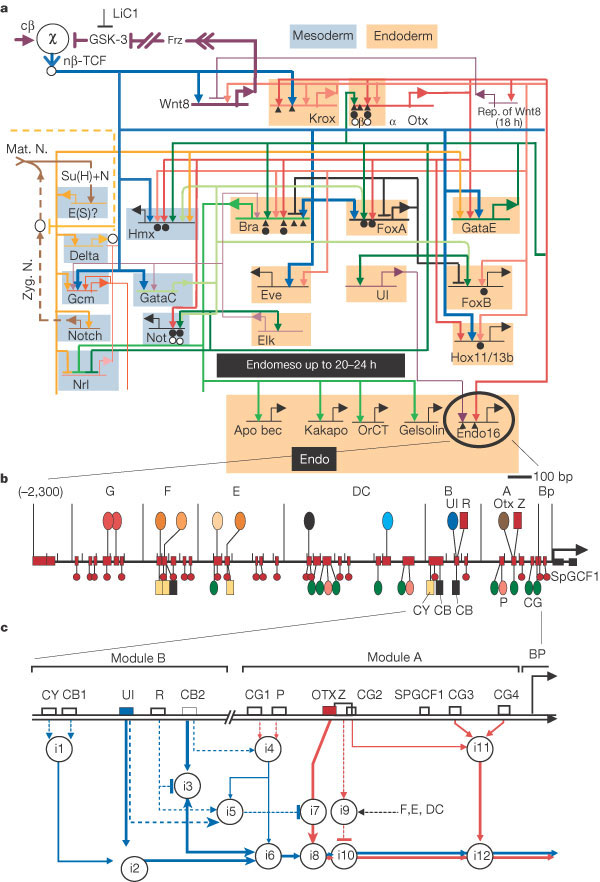

So, what are these key discoveries that undermine Meyer’s claims? Marshall explains that it used to be thought that, “New body plans required new genes” (24:30) – that different types of plants or animals are built with very different basic component parts, requiring a “completely different set of genes” for each new type of body plan. However, since that time it has become apparent that the number of unique genes (protein-coding genes) required to make very different kinds of living things is quite modest, “on the order of 10,000 or so”. Marshall goes on to exclaim, “Staggeringly, all the animals use essentially the same genes! – just deployed slightly differently.” (25:28) Therefore, there is no need for massive genetic evolution to explain the Cambrian explosion anymore. Obviously then, Meyer’s central argument in his book, that new genes cannot be evolved in a reasonable amount of time, certainly not fast enough to explain the Cambrian explosion, is essentially irrelevant given that practically no new genes need to be evolved at all.

Marshall suggested that perhaps Meyer fell into this misunderstanding because Meyer isn’t a biologist. And, for those who are not constantly reading up on the new discoveries in biology, it can be very hard to keep up with the latest information.

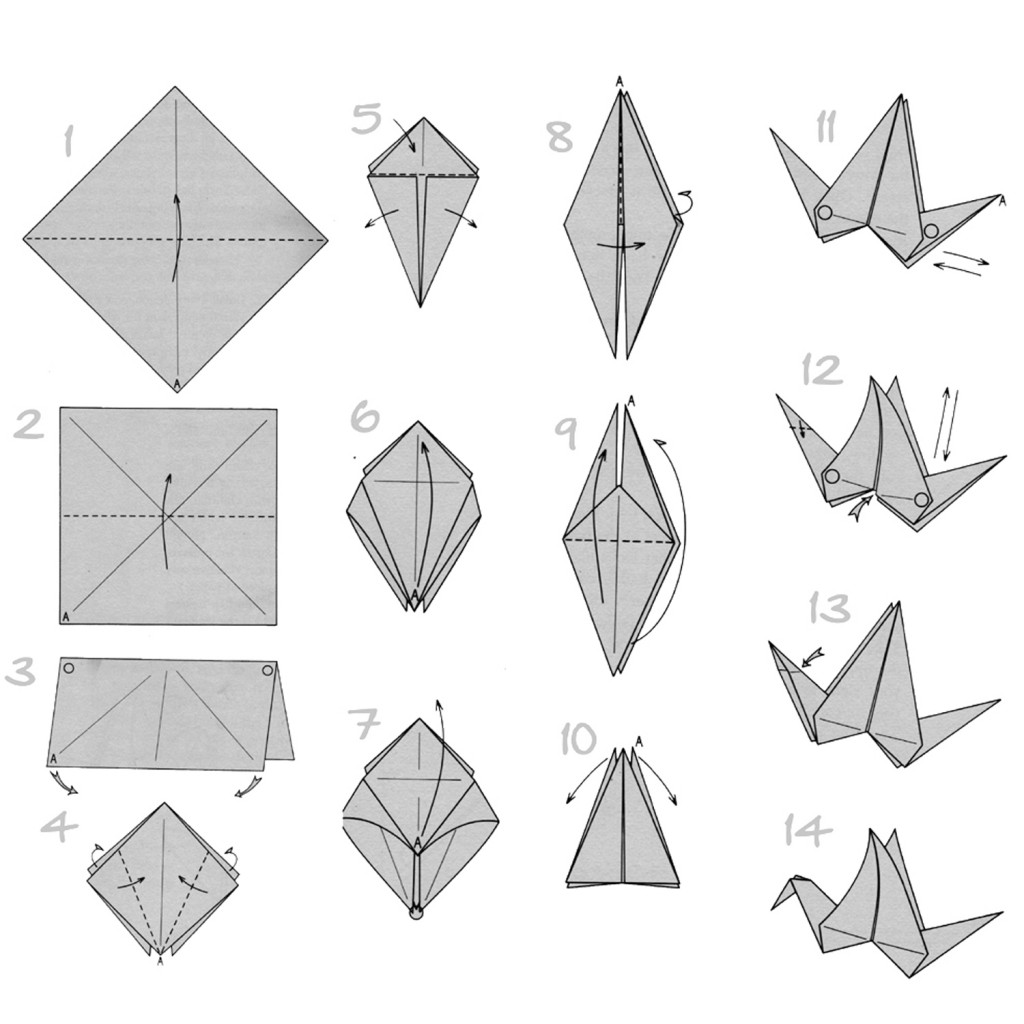

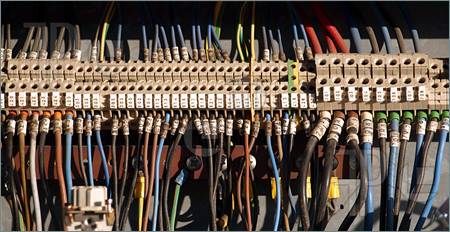

As for the difference between living things and the things that humans create and design (32:30), Marshall argued that human design is a “top down” approach, completely different from the ability of life to start as a single cell and then add components to itself as it goes along through time in a “bottom up” approach. This allows life to “grow and rebuild itself” whereas human-designed objects do not. Also, the basic parts of living things are interchangeable, whereas those of human-designed systems are not (i.e., the parts within a watch aren’t interchangeable with each other and therefore cannot be moved around within it – which isn’t true when it comes to something built with Legos or even language systems or computer systems where the same basic words are used to form entire paragraphs and books and operating systems). Marshall also argued that proteins are far more flexible in their sequence requirements than is the human language system, where very little sequence variability is allowed without a complete loss of function. That makes the specificity in a protein “hundreds of orders of magnitude less than the degree of specificity for a computer code or for a written text.”

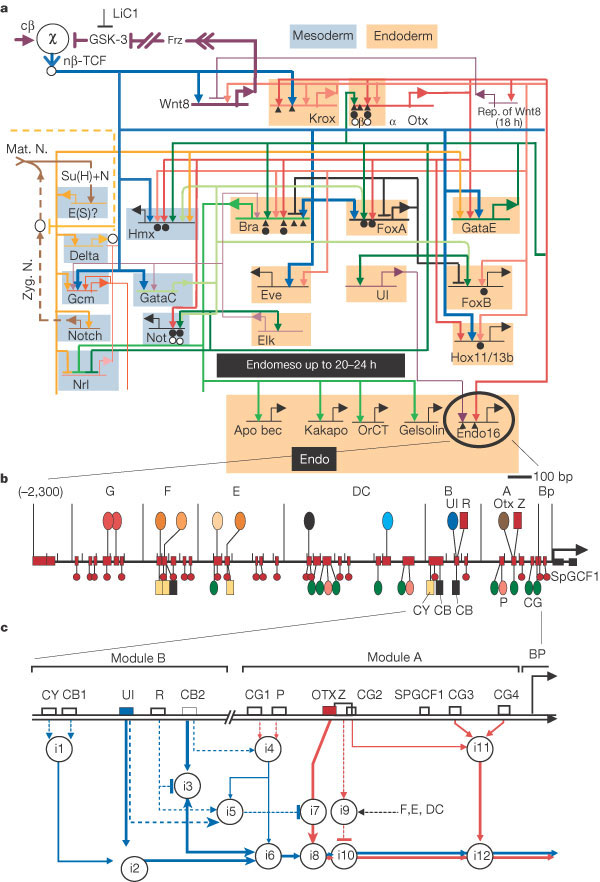

Meyer’s response

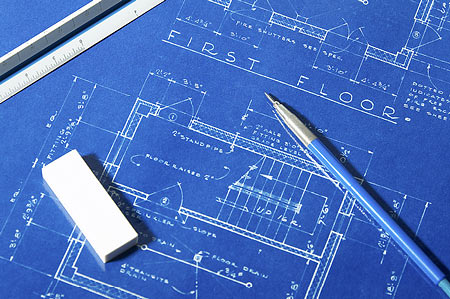

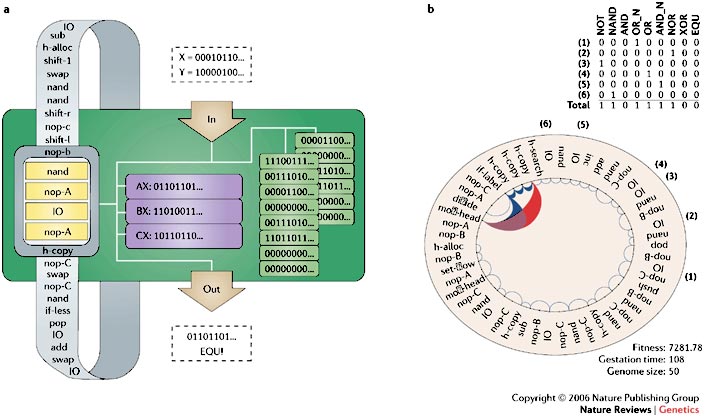

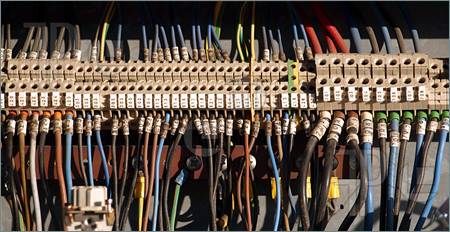

At first approximation Marshall’s arguments seem pretty good – well reasoned and convincing. Meyer countered, however, by noting that he did address several of Marshall’s key arguments in Chapter 13 of his book (36:00) – specifically the “developmental gene regulatory networks” or dGRNs. These control genes that “tell other genes what to do” are involved in coordinating the gene products and their expression “in a circuit-like manner” – like the blueprint for a house. Although Marshall argued in his Science review and in the debate that these dGRNs could have been rewired by evolutionary mechanisms early in the Cambrian period, he ignores the fact that “rewiring” itself would require a lot of informational input that would not be very easy to come by, since the vast majority of possible ways that a rewiring could be done would not produce anything beneficial over what came before – exactly the same situation that makes it difficult for novel protein-coding genes to evolve. In other words, circuit rewiring requires multiple coordinated mutations all happening at the very same time to be successful. The work of Eric Davidson has shown that the central sub-circuits of dGRNs cannot be perturbed without “catastrophic effects to the developing organism.” And, any control system that specifies an outcome cannot be made to be significantly more “malleable” or else it will cease to be a control system. By their very nature control systems are “exquisitely constrained” systems.

Marshall on how dGRNs can evolve

Marshall countered (42:30) with the explanation that, from an evolutionary perspective, it can be difficult to understand that life “unfolds” and as it unfolds it “accretes complexity and sophistication”, which allows for dGRNs to be “less encumbered at the beginning”. Marshall argued that Meyer is “precisely correct” in suggesting that modern dGRNs could not be modified between modern body plans – that the gap is far too wide so as to be essentially uncrossable. However, given the process of life as an “unfolding” process, one can “wind back the clock” to when life was made up of just single-celled organisms that then evolved into simplistic colonial organisms – or the “first recognizable animals called sponges”.

What is remarkable about sponges is that, “They do not have tissues or organs.” Yet, they have “essentially the same set of genes as a drosophila, a jelly fish, and a human… that have the capacity to make tissues and organs already sitting there.” So, the theory is that different lineages independently evolved different body plans based on the same set of original genes. The body plans themselves did not switch between one type of body plan and another, but each evolved from scratch from the same basic foundation on the ground that had yet to evolve a body plan blueprint. Then, once the body plans are in place, natural selection holds them in place and prevents them from switching between different types of body plans.

The concealment of significant bioengineering problems

Meyer responded (45:50) that the metaphorical language that Marshall used “concealed significant bioengineering problems.” And, most tellingly, he cited Marshall’s own lectures on life’s ability to “unfold”, where Marshall admits that this ability is dependent upon pre-existing information in the form of “a few simple rules” that allow for it (a dramatic understatement of the pre-existing informational complexity it would take to get novel phenotypes to “unfold”). In short, the emergent complexity of developing organisms always depends upon prior informational complexity to code for the unfolding or emergent complexities of living things.

Marshall quickly responded, “I think there’s been a subtle shift in ground and I’m not going to deny the points that Stephen just made.” (47:40) He pointed out that his primary problem with Meyer’s book was its placing of the evolution of new genetic information (which Marshall evidently equates with protein-coding genes alone) at the time of the Cambrian explosion. If Meyer is in fact arguing that these genes may have come along before the Cambrian period, his current argument for the basis of the Cambrian explosion, based largely on novel dGRNs, is a different argument – which is “fair enough”. The question now is, where does the genetic and epigenetic information come from to begin with? “I think that’s a very very important point.”

To which Meyer responded that he thought that novel genetic information was likely required within the Cambrian period, but that it could have pre-existed the Cambrian to be “parceled out in phases” during the Cambrian period. However, either way the origin of the required information must be accounted for and explained.

Meyer went on to add that DNA is “necessary but not sufficient” to build animals (49:30). That is why dGRNs are so important to explaining phenotypic diversity between various types of animals that all share essentially the same basic protein-coding genes. It is also being discovered that epigenetic regulation is very important to phenotypic expression as well.

Marshall noted, at this point, “I have only one response to that – You Betcha! That’s it precisely.” (50:00)

So, where does the information come from?

When trying to tackle the main question as to where the required information ultimately comes from, Marshall started off with another illustration. He pointed to the fact that energy is delivered, from the Sun to the Earth, at a very “steady and continuous rate” and argued that the Second Law of Thermodynamics actually tells us that in such an energy-flow situation there is increased order and material cycles. This means that “driven systems explore the improbable.” So, as strings of DNA and amino acids become longer and longer, the functional properties of these strings will be explored – and “some will turn out to be functional and some to be nothing interesting.” He concluded by arguing, “So, the fact that complexity emerges on a planet like ours is entirely understandable in terms of simple mechanical processes.”

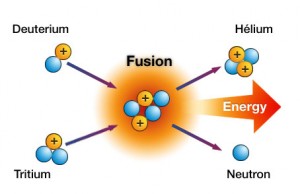

Meyer, of course, responded (52:45) by noting that there is a branch of thermodynamics, known as non-equilibrium thermodynamics, that looks at what happens when energy is pumped through a system – which is popular in the origin of life debate (i.e., the abiogenesis debate). The problem is that all of the examples of energy flowing through a system and the order that follows show the same thing – symmetric or redundant order (e.g., simple patterns, spiral wave currents, convection currents, etc.). For example, as a bathtub is drained, the swirl of the water that forms as it goes down the drain is an example of the simple spiral wave pattern that is produced by this sort of phenomenon. However, this is unlike the kind of “order” that is present in biological systems – which shouldn’t even be classified as “order” in the thermodynamic sense of the term. A better term is “specified complexity” or “functional informational complexity”. This is because there is a difference between meaningful or functional information and “order” in the thermodynamic understanding of order. Meyer pointed out to Marshall, “You’re mixing up two different concepts there.”

Can Intelligent Design be a valid science?

Toward the end of the discussion (1:04:00) Meyer and Marshall went back and forth a bit on whether Intelligent Design can or can’t be a valid science. Marshall argued that the conclusion of design really doesn’t expand the “map” of science or provide any useful predictions as to how the world works, since ID theories have made no positive contribution toward a scientific understanding of how the world works. “Intelligent Design hasn’t offered any new words yet”. He went on to note that ID might plausibly be viewed as a scientific theory if only it had something solid to contribute to the map of how science operates in a tangible, explicit way.

Meyer responded by saying that he completely agreed with Marshall – that scientific theories need to have wide explanatory power as well as predictive power. Toward this end Meyer noted that he had personally laid out ten predictions based on intelligent design in his previous book, Signature in the Cell.

When questioned as to the actual identity of the designer of life, Meyer argued that it could be known that the designer is intelligent, has a mind, is rational, is capable of generating functional/meaningful information in the form of codes and alphabetic sequences that convey meaning and thoughts and perform functions – very similar to our own abilities. “That’s what we can know scientifically,” explained Meyer. However, when it comes to believing in God as the designer, Meyer admitted that while he is a theist, he has other reasons for believing that God was in fact responsible for life and its diversity on this planet.

When asked if the SETI project isn’t equivalent to the Intelligent Design position being promoted by Meyer (1:11:08), Marshall said that if we were to hear Shakespeare being sung beautifully from Alpha Centauri, we’d all say, “Good heavens! … Wow! There it is.” But he argued that this concept has no bearing on Intelligent Design whatsoever. In other words, if we heard an artificial SETI signal, “We’d presume that there is some physical entity somewhere that also had a mind that could produce the signal. But, this is where the analogy breaks down miserably with the intelligent design thing. Yes, a mind is capable of these things, but we build everything in a temporal-spatial framework – everything. So yes, consciousness and minds build these things, but we build it. So, the question is, how can it be built? Where are the factories? What is the signature?… At some point there has to be a corporeal body if we’re going to do this in a scientific way that operates in space and time. And, intelligent design hasn’t been able to point to any of those things whatsoever. It’s just a disembodied notion of consciousness and mind. The whole point of the Darwinian revolution, the whole point of it, which made Darwin so hesitant, was the fact that natural processes were capable of producing things that looked like, superficially, things that humans make. But, it’s only superficial. When you get into the details, my word, they’re fundamentally different.”

When asked what evidence could possibly convince him to favor intelligent design, Marshall said that he is fundamentally open to new ideas and the possibility that some evidence might someday be discovered that favored ID. It’s just that he has yet to see such evidence.

Meyer responded (1:15:20) by arguing that it doesn’t really matter if an intelligent mind is embodied or not embodied. Either way, the fact that some intelligent mind of some kind or another was responsible for certain types of phenomena can still be determined scientifically by studying the object itself – such as the sheet music written by a composer, which Marshall had cited as an illustration. If someone were to go to the British Museum and look at the Rosetta Stone and say, “Isn’t it wonderful what wind and erosion can do,” then, in Meyer’s words, “we would look at that person as daft… We recognize the attributes of intelligence in many other fields of discourse, in archaeology, the SETI research program is a good example. Information is taken to be a hallmark of intelligent activity in all of these other realms of experience, but in biology we say, ‘No, we can’t go there.’ This is where I think Charles is revealing that he has some deeper metaphysical commitments of his own.”

My take on the debate

I thought that the debate was very interesting. I’m obviously biased, but I thought that Marshall lost the debate at the point where he essentially admitted that, at the very least, the “Cambrian Explosion” of a huge variety of very different body plans required the pre-existence of information that would have been needed to code for these body plans. Chastising Meyer that this required information need not have been generated during the Cambrian period is hardly relevant to the problem of generating the required information in the first place. Where did this information come from and how was it produced by random mutation and natural selection? Marshall seems to have no idea aside from some vague but ardent kind of faith that it is somehow due to some kind of “simple mechanical process” that spontaneously arises out of non-equilibrium thermodynamics. However, when it comes to the details, he has no idea how to explain how non-equilibrium thermodynamics produces anything beyond very simple repetitive patterns – nothing like the type of functional sequence complexity found in the DNA or proteins of living things or other kinds of language systems or computer codes.

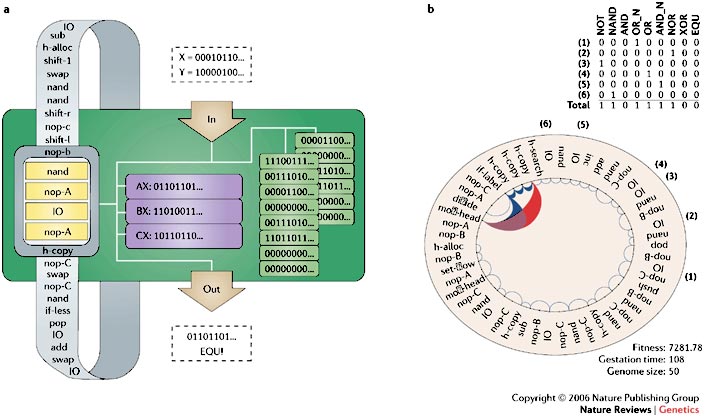

I also found Marshall’s arguments for the uniqueness of living things, compared to human creations, very misinformed. He essentially claimed that the parts within living things are interchangeable and that living things can reproduce and unfold according to developmental regulatory network programming. The fact is, many things humans can and do design are based on interchangeable parts. All human language systems, for example, are based on a limited number of words, equivalent to protein domains in living things, that can be interchanged within paragraphs and books in meaningful/functional ways – exactly as occurs in DNA and protein-based systems (like a structure built out of Legos). Computer codes work the same way as well and, on top of this, can be programmed to reproduce and mutate just like DNA. This is in fact the basis for evolution simulation programs like Avida that Richard Lenski and others work with all the time in an effort to study biological evolution. So, in no sense of the word are the codes and mechanical machines within living things somehow unique from what humans can and have created and continue to create all the time.

To add to his problems, Marshall argues that DNA and protein-based systems are orders of magnitude less specified compared to human language systems, but fails to recognize the significance of the degree of protein specificity that is required to produce useful functionality. Consider, for example, the following sentence:

Waht I ma ytring ot asy heer si htat nEgilsh is airfly exflbie oot.

You see, a sequence of letters can be pretty flexible in the context of an English-speaking environment and still get the intended idea across. Of course, the same is true for protein sequences – just as Marshall suggests. Most of the amino acid characters in a protein sequence can be changed, one at a time, without a complete loss of original function. And, if carefully selected, multiple amino acid positions can be changed at the same time, 50% or even 80% on occasion, without a complete loss of original function. This is especially true of the central hydrophobic regions of proteins, “a high fraction” of which can be mutated, at the same time, without a complete loss of original function (Link). However, the exterior amino acids of a protein generally require a higher level of specificity and cannot be so easily mutated, even among the most similar amino acid alternatives, without a significant loss of function.

Of course, multiple completely random mutations that hit a protein at the same time do tend to result in an exponential decline in functionality. However, carefully selected mutations can “compensate” for each other, and result in greater diversity of protein sequences without a complete loss in function.

What this means, then, is that although there is a fair degree of flexibility for most types of protein-based systems, there is also a fair degree of specificity required, beyond which the function in question will cease to exist at a selectable level in a given environment. In other words, an average protein can tolerate an average of 2.2 amino acid substitutions per position in the sequence without a complete loss of function (Link). Considering that there are 20 possible amino acid options, an average of just 2.2 tolerated options per position is still a fairly sizable constraint on protein flexibility. So, at this point it seems quite clear that the argument that up to 80% of a protein sequence can be changed without a significant loss of function simply doesn’t reflect the true limitations on sequence flexibility for most protein-based systems. This is the reason why, as one considers protein-based systems that require a larger and larger number of amino acids to produce a given function, the ratio of stable/functional proteins within these larger sequence spaces still drops off exponentially – the significance of which Marshall has yet to realize.
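
To put rough numbers on this drop-off, here is a minimal Python sketch. It assumes, purely for illustration, that each position tolerates 2.2 of the 20 amino acids independently of every other position, so that the functional fraction of sequences of length n is (2.2/20)^n. That independence assumption is a simplification (the compensating mutations mentioned above violate it), and the function name `log10_functional_fraction` is hypothetical.

```python
import math

AMINO_ACIDS = 20          # size of the amino acid alphabet
TOLERATED_PER_SITE = 2.2  # average tolerated options per position (figure quoted above)


def log10_functional_fraction(length, tolerated=TOLERATED_PER_SITE, alphabet=AMINO_ACIDS):
    """Return log10 of the fraction of sequences of this length that remain
    functional, ASSUMING every position is independent (a simplification)."""
    return length * math.log10(tolerated / alphabet)


# Print the exponent of the functional fraction for a few sequence lengths.
for n in (50, 100, 150, 300):
    print(f"length {n}: fraction ~ 10^{log10_functional_fraction(n):.1f}")
```

Under this simplifying assumption, each added position multiplies the functional fraction by the same factor (0.11), so the logarithm of the fraction falls linearly with sequence length, which is exactly what an exponential drop-off means.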

Marshall’s argument that evolving control networks must be easier than evolving protein-coding genes also highlights his failure to see that the very same problems apply to both situations. Just as in protein-based sequence space, the vast majority of possible gene regulatory networks (GRNs) that could be produced would not be functionally beneficial. And this is exponentially more and more true with each step up the ladder of functional complexity. The evolutionary steps keep getting exponentially higher and higher, which causes evolutionary progress to slow down, exponentially, with each step up the ladder. Getting a GRN to take the same proteins to build a different system at a higher level of functional complexity requires accurately crossing vast non-functional gaps in the ocean of structural options that would not be beneficial.

Also, I found it fairly disingenuous for Marshall to argue that we have to discover the actual mechanism for intelligent design, “the factories,” etc., before an object or phenomenon can be recognized as being intelligently designed. He seemed to treat the hypothesis of intelligent design as essentially equivalent to an argument for “God did it”. Why then would he recognize the argument for SETI as being somehow different? After all, he himself noted that he would accept the SETI signal as being intelligently designed – without having to be given the actual identity of the SETI intelligence or the precise method used to produce the SETI signal (i.e., no factories need to be demonstrated or anything else like that). Why then this double standard when it comes to living things? Why the need to actually be shown the designer at work on the one hand but not on the other?

As far as Meyer’s explanation of intelligent design as a valid scientific theory is concerned, I thought he covered the points presented quite well. I would only add the concept that complex meaningful or functional information exists on different levels of functional complexity. This concept highlights the fact that it is exponentially harder to produce something one step up the ladder of functional complexity, regardless of whether a new gene or the rewiring of a dGRN is required to achieve this step. The odds that a random dGRN rewiring process, or any other kind of random mutation, will produce anything qualitatively novel that has a greater minimum size and/or specificity requirement decrease, exponentially, as the minimum structural threshold requirements increase in a linear manner. That means, of course, that evolving anything beyond very low levels of functional complexity, by any random process of any kind, even starting with a bunch of higher level systems, is extremely unlikely this side of a practical eternity of time (i.e., trillions upon trillions upon trillions of years).

Still, Marshall maintains hope that someday, as happened with the unknown source of the Sun’s energy in Newton’s day, some future discovery will be made that will vindicate Darwin. He might not currently know precisely how random mutations and natural selection can generate the high levels of functional information needed to produce life and its diversity beyond very low levels of functional complexity, but he has faith that somehow, some way, the mindless mechanism of random mutations and natural selection was in fact able to do the job. Of course, unlike the situation in Newton’s day, where the source of the Sun’s energy was completely unknown, the informational complexity apparent in living things does match a known source of such complexity – i.e., humans and the meaningfully/functionally complex systems humans create all the time.

Why then doesn’t Marshall do what science is supposed to do: go with the weight of evidence that is currently in hand and recognize that the best explanation that we currently have for the origin of functionally complex information is intelligent design? Is it because of a fear that this recognition might “allow a Divine Foot in the door”? So what? Where is the “science” behind a theory that is based on what might be discovered in the future that might counter what is currently known about the only viable source of higher levels of functionally complex information? Where is the predictive value in that? Where is even the potential for Marshall’s position to be questioned or effectively falsified if he can always appeal to some as yet future discovery to support his position? Sounds like blind faith and wishful thinking to me – certainly not a valid science that is open to testing and at least the potential for falsification. Meyer is obviously correct in suggesting that Marshall has his own faith-based metaphysical position, independent of any empirical evidence or science, that is driving his position on origins.

In any case, I would like to close with a few recent statements from James M. Tour, one of the ten most cited chemists in the world.

Does anyone understand the chemical details behind macroevolution? If so, I would like to sit with that person and be taught, so I invite them to meet with me.

I will tell you as a scientist and a synthetic chemist: if anybody should be able to understand evolution, it is me, because I make molecules for a living, and I don’t just buy a kit, and mix this and mix this, and get that. I mean, ab initio, I make molecules…

Still, I don’t understand evolution, and I will confess that to you. Is that OK, for me to say, “I don’t understand this”? …

Let me tell you what goes on in the back rooms of science – with National Academy members, with Nobel Prize winners. I have sat with them, and when I get them alone, not in public – because it’s a scary thing, if you say what I just said – I say, “Do you understand all of this, where all of this came from, and how this happens?” Every time that I have sat with people who are synthetic chemists, who understand this, they go “Uh-uh. Nope.”

These people are just so far off, on how to believe this stuff came together. I’ve sat with National Academy members, with Nobel Prize winners. Sometimes I will say, “Do you understand this?” And if they’re afraid to say “Yes,” they say nothing. They just stare at me, because they can’t sincerely do it.

If you understand evolution, I am fine with that. I’m not going to try to change you – not at all. In fact, I wish I had the understanding that you have.

But about seven or eight years ago I posted on my Web site that I don’t understand. And I said, “I will buy lunch for anyone that will sit with me and explain to me evolution, and I won’t argue with you until I don’t understand something – I will ask you to clarify. But you can’t wave by and say, ‘This enzyme does that.’ You’ve got to get down in the details of where molecules are built, for me.” Nobody has come forward.

The Atheist Society contacted me. They said that they will buy the lunch, and they challenged the Atheist Society, “Go down to Houston and have lunch with this guy, and talk to him.” Nobody has come! Now remember, because I’m just going to ask, when I stop understanding what you’re talking about, I will ask. So I sincerely want to know. I would like to believe it. But I just can’t.

Now, I understand microevolution, I really do. We do this all the time in the lab. I understand this. But when you have speciation changes, when you have organs changing, when you have to have concerted lines of evolution, all happening in the same place and time – not just one line – concerted lines, all at the same place, all in the same environment … this is very hard to fathom.

I was in Israel not too long ago, talking with a bio-engineer, and [he was] describing to me the ear, and he was studying the different changes in the modulus of the ear, and I said, “How does this come about?” And he says, “Oh, Jim, you know, we all believe in evolution, but we have no idea how it happened.” Now there’s a good Jewish professor for you. I mean, that’s what it is. So that’s where I am.
